What Is Nano Banana Pro? 2026 Buyer’s Guide for Real Marketing Teams

If you are searching “what is Nano Banana Pro”, you probably want a direct answer before wasting time:

- Is this just another AI image toy?

- Is it usable for paid ads and business workflows?

- Is it better for teams than generic image generators?

- Who should actually pay for it, and who should not?

Short answer: Nano Banana Pro is a production-oriented AI image workflow layer designed for teams that care about how quickly they get usable output, not just pretty one-off generations.

This page is a buyer-style guide, not a model fan page.

Quick Answer (One-Minute Version)

Nano Banana Pro is best for:

- performance marketing teams shipping creatives weekly,

- ecommerce teams producing product visuals at volume,

- founders/operators who need output now, not design theory,

- agencies balancing speed, consistency, and client approval.

Nano Banana Pro is not best for:

- pure art exploration with no business output target,

- teams without a prompt structure or review process,

- users expecting one-click perfection with zero iteration.

If your KPI is revenue-linked creative throughput, this tool category makes sense.

What “Pro” Actually Means in Practice

Many tools use “Pro” as a pricing label. Here, “Pro” should be interpreted as workflow behavior:

- Higher usable-output ratio: more generations can move toward publish-ready assets.

- Faster iteration loops: shorter cycles from brief → variants → approval candidate.

- Better campaign consistency: visual direction can stay coherent across multiple asset sets.

- Operational fit: easier handoff between marketer, designer, operator, and reviewer.

In other words, Pro value is operational, not cosmetic.

Core Capabilities Buyers Usually Care About

1) Prompt controllability

You can guide composition, tone, background context, focal hierarchy, and campaign intent more predictably than with casual prompting.

2) Variant velocity

For growth teams, the win is not one “perfect” output. The win is generating multiple viable variants quickly enough for testing windows.

3) Production consistency

Consistency matters when you need channel packs:

- 1:1 feed,

- 4:5 social,

- 9:16 short-video cover style.

4) Team repeatability

With template prompts and review rules, teams can reduce random quality swings.

Who Should Use Nano Banana Pro (and Who Shouldn’t)

Best-fit users

- Performance marketers: need CTR/CVR-driven creative cycles.

- Ecommerce teams: product-heavy visual production under deadlines.

- Growth operators: need fast, cost-controlled experimentation.

- Small agencies: client output cadence requires repeatability.

Weak-fit users

- Hobby users with no production goal.

- Teams without defined visual standards.

- Teams unwilling to run quality review before publish.

If you are not ready to standardize prompts and approval, tool upgrades won’t fix your process.

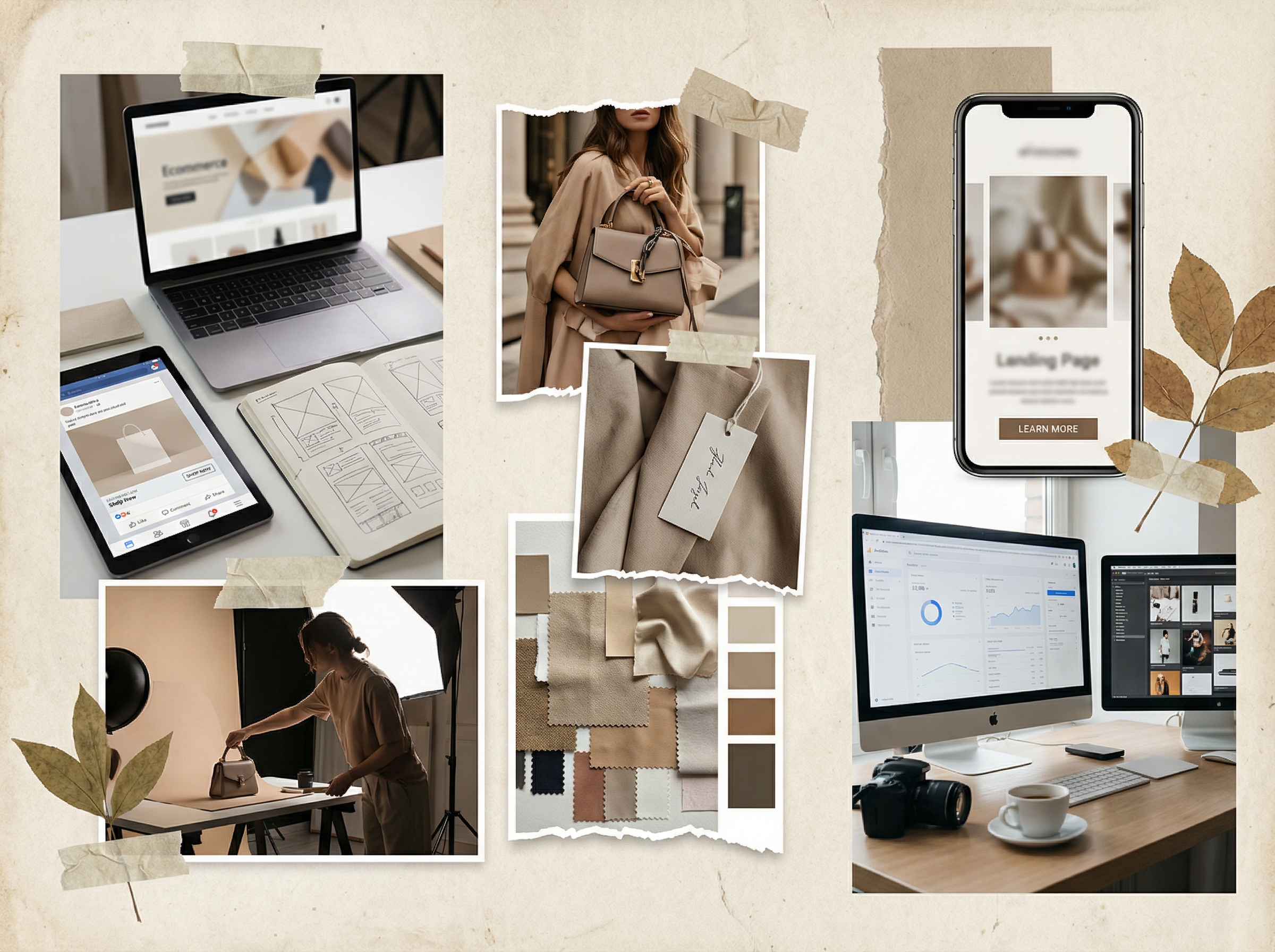

Common Use Cases That Convert

Use Case A: Paid Social Creative Batches

Goal: produce 8-20 variants/week for angle testing.

What matters:

- speed to first usable output,

- text-safe composition space,

- clear product focus.

Use Case B: Ecommerce Product Content

Goal: maintain volume and consistency for launches and promotions.

What matters:

- product detail fidelity,

- brand-safe style control,

- low revision overhead.

Use Case C: Landing Page Visual Refresh

Goal: refresh hero and support visuals without full photoshoot cycles.

What matters:

- message-visual alignment,

- trust/clarity tone,

- fast deployment cadence.

What Nano Banana Pro Is Not (Important)

To avoid wrong expectations:

- It does not remove the need for strategy.

- It does not eliminate legal/compliance review.

- It does not guarantee universal brand correctness without constraints.

- It does not replace every design workflow in enterprise pipelines.

Think of it as a creative acceleration layer, not a full business replacement stack.

Decision Lens: Pro vs Generic Image Tools

Use this buyer lens instead of feature hype.

| Decision Dimension | Generic Tools | Nano Banana Pro Style Workflow |
|---|---|---|
| First-pass usability | Variable | Typically more controlled |
| Iteration speed | Often uneven | Usually better for campaign loops |
| Team handoff readiness | Lower | Higher with template process |
| Output consistency | Unstable without heavy manual work | Better under structured prompts |
| Business workflow fit | Mixed | Stronger for recurring production |

This is why operators often choose “boring reliability” over aesthetic novelty.

7-Day Qualification Framework (Do This Before Committing)

If you want a no-BS decision, run this test:

Setup

- 1 product line or offer family

- 3 campaign angles

- 3 formats (1:1, 4:5, 9:16)

- same review rubric for all outputs
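The setup above amounts to a small test matrix: one product line crossed with three angles and three formats. A minimal sketch in Python follows; the product line and angle names are hypothetical placeholders, not anything defined by Nano Banana Pro.

```python
from itertools import product

# Hypothetical placeholders: swap in your own product line and angles.
PRODUCT_LINE = "example-product-line"
ANGLES = ["benefit-led", "social-proof", "offer-led"]
FORMATS = ["1:1", "4:5", "9:16"]  # the three formats named in the setup


def build_test_matrix(product_line, angles, formats):
    """Return one brief per (angle, format) pair for a single product line."""
    return [
        {"product_line": product_line, "angle": angle, "format": fmt}
        for angle, fmt in product(angles, formats)
    ]


briefs = build_test_matrix(PRODUCT_LINE, ANGLES, FORMATS)
print(len(briefs))  # 3 angles x 3 formats = 9 briefs
```

Listing the briefs up front makes it easier to apply the same review rubric to every cell instead of only the outputs that happen to look good.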

Track metrics

- publish-ready ratio,

- average revisions per approved asset,

- time to first usable output,

- team handoff friction,

- consistency score across variant sets.

Decision rule

If this workflow reduces CPAA (cost per approved asset) and improves cadence, it is worth it.
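The tracked metrics and the decision rule can be sketched in a few lines of Python. This assumes you log four totals per test window; the field names are illustrative, the sample figures are made up, and "cadence" is approximated here as approved assets per test window, which is an assumption rather than a definition from this guide.

```python
from dataclasses import dataclass


@dataclass
class WorkflowRun:
    """Totals for one test window. Field names are illustrative."""
    generated: int     # total outputs generated
    approved: int      # outputs that passed review
    revisions: int     # revision rounds spent on approved assets
    total_cost: float  # tool spend plus people time, in one currency


def publish_ready_ratio(run):
    """Share of generated outputs that were approved."""
    return run.approved / run.generated if run.generated else 0.0


def avg_revisions(run):
    """Average revision rounds per approved asset."""
    return run.revisions / run.approved if run.approved else 0.0


def cpaa(run):
    """Cost per approved asset; infinite if nothing was approved."""
    return run.total_cost / run.approved if run.approved else float("inf")


def worth_it(old, new):
    """Decision rule: CPAA falls and cadence (approved assets per window) rises."""
    return cpaa(new) < cpaa(old) and new.approved > old.approved


# Made-up sample figures, for illustration only.
old_run = WorkflowRun(generated=40, approved=12, revisions=36, total_cost=1200.0)
new_run = WorkflowRun(generated=60, approved=36, revisions=72, total_cost=1800.0)

print(cpaa(old_run), cpaa(new_run), worth_it(old_run, new_run))
```

Handoff friction and consistency score are reviewer judgments rather than counts, so they are left out of this sketch.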

Directional Benchmark Snapshot

Below is a directional internal benchmark format (example structure for decision-making):

| Metric | Structured Pro Workflow |
|---|---|
| Publish-ready ratio | 58-66% range |
| Avg revisions per approved asset | 1.9-2.4 |
| Time to first usable output | 10-15 min |
| Major rework rate | 20-30% |
| Handoff friction (1-5, lower better) | 1.8-2.4 |

Use this as a framework; your exact numbers depend on prompt quality and review standards.

Pricing Fit: When Pro Is Worth Paying For

You usually get value from Pro-tier workflows when:

- your team ships assets every week,

- delay has direct campaign cost,

- you need multiple variants per brief,

- two or more contributors work in parallel,

- creative output affects revenue targets.

You may not need Pro yet when:

- you generate occasionally,

- deadlines are soft,

- output volume is low,

- you are still validating basic use cases.

For plan details, see Pricing.

Upgrade Triggers (Use as a Rule)

Upgrade when 2+ triggers persist:

- quota pressure appears before weekly cycle ends,

- team members queue for generation access,

- revision loops become the bottleneck,

- experiments are delayed to “save credits,”

- campaign launch quality drops under deadline stress.

If these are visible, under-capacity is already costing you.
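The "2+ triggers persist" rule can be made mechanical. The sketch below assumes a trigger "persists" when it was observed in each of the last two weeks; that window is an assumption, since the rule above does not define persistence, and the trigger names are shorthand invented for the five bullets.

```python
# Shorthand names for the five upgrade triggers listed above (illustrative).
UPGRADE_TRIGGERS = {
    "quota_pressure",
    "generation_queue",
    "revision_bottleneck",
    "experiments_delayed",
    "quality_drop_under_deadline",
}


def persistent_triggers(weekly_observations, weeks=2):
    """Triggers seen in every one of the last `weeks` weeks (weeks >= 1)."""
    recent = weekly_observations[-weeks:]
    if len(recent) < weeks:
        return set()  # not enough history to call anything persistent
    seen_every_week = set.intersection(*(set(week) for week in recent))
    return seen_every_week & UPGRADE_TRIGGERS


def should_upgrade(weekly_observations, weeks=2, min_triggers=2):
    """True when at least `min_triggers` triggers persist."""
    return len(persistent_triggers(weekly_observations, weeks)) >= min_triggers


# Made-up history: one set of observed triggers per week, oldest first.
history = [
    {"quota_pressure"},
    {"quota_pressure", "revision_bottleneck"},
    {"quota_pressure", "revision_bottleneck", "generation_queue"},
]
print(should_upgrade(history))
```

Requiring persistence across weeks keeps a single rough launch week from triggering a plan change.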

Downgrade Triggers

Downgrade when:

- usage stays below half capacity for 6+ weeks,

- campaign frequency drops,

- team composition shrinks,

- CPAA remains healthy under lower-load assumptions.

Smart operations include controlled downgrades too.

Why Teams Fail Even With Good Tools

Most failures come from workflow mistakes, not model limitations:

- no standardized prompt templates,

- no approval checklist,

- no channel-specific visual rules,

- no ownership for final signoff,

- no tracking of CPAA/throughput metrics.

Tool quality amplifies process quality. It cannot substitute for it.

What “Good” Implementation Looks Like

A practical implementation stack:

Layer 1: Prompt system

- fixed template fields,

- prohibited element list,

- channel-specific format presets.
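One way to picture Layer 1 is a small template object that every contributor fills in the same way. This is a sketch under assumptions: the field names and channel keys are hypothetical, and the code only assembles prompt text. It does not call any Nano Banana Pro API.

```python
from dataclasses import dataclass, field

# Channel presets mapped to the aspect ratios used in this guide.
# The channel keys themselves are illustrative.
CHANNEL_PRESETS = {"feed": "1:1", "social": "4:5", "short_video_cover": "9:16"}


@dataclass
class PromptTemplate:
    """Fixed template fields plus a prohibited element list."""
    product: str
    campaign_intent: str
    tone: str
    background: str
    prohibited: list = field(default_factory=list)

    def render(self, channel):
        """Build the prompt text for one channel; unknown channels raise KeyError."""
        aspect = CHANNEL_PRESETS[channel]
        parts = [
            f"Product: {self.product}",
            f"Campaign intent: {self.campaign_intent}",
            f"Tone: {self.tone}",
            f"Background: {self.background}",
            f"Aspect ratio: {aspect}",
        ]
        if self.prohibited:
            parts.append("Avoid: " + ", ".join(self.prohibited))
        return "\n".join(parts)


# Made-up example values.
template = PromptTemplate(
    product="stainless steel water bottle",
    campaign_intent="summer launch, angle: durability",
    tone="clean, trustworthy",
    background="outdoor trail, soft daylight",
    prohibited=["competitor logos", "embedded text"],
)
prompt_text = template.render("short_video_cover")
print(prompt_text)
```

Because the fields are fixed, two people briefing the same campaign produce prompts that differ only where the brief differs, which is the point of a prompt system.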

Layer 2: Review system

- first-pass quality gate,

- claim/compliance check,

- one accountable reviewer.

Layer 3: Metrics system

- publish-ready ratio,

- revision burden,

- CPAA,

- weekly throughput.

Teams that implement all three layers get repeatable gains.

30-Day Rollout Plan (Copy/Paste)

Week 1: Baseline

- measure current creative cycle time,

- define approval checklist,

- set prompt template v1.

Week 2: Controlled production

- run one campaign family through Nano Banana Pro workflow,

- collect usability and revision metrics.

Week 3: Optimization

- refine prompt templates,

- tighten reviewer criteria,

- reduce retry waste.

Week 4: Decide

- compare old vs new CPAA and throughput,

- keep, scale, or roll back based on data.

This avoids hype-driven decisions.

Checklist: Should You Use Nano Banana Pro?

- I publish commercial visuals weekly.

- Creative delay has measurable business cost.

- I need multiple variants per campaign.

- I have at least one defined review process.

- I can measure approved output, not just raw generation count.

- I care about consistency across channels.

If you checked 4+ items, this is likely a strong fit.

FAQ

1) What is Nano Banana Pro in one sentence?

A production-focused AI image workflow for teams that need faster, more consistent commercial creative output.

2) Is it only for designers?

No. Marketers, operators, and founders can use it effectively with structured prompts.

3) Is it better than generic image tools?

For business workflows and repeatable campaign output, often yes.

4) Can it replace all design tools?

No. It accelerates production workflows; it does not replace every downstream design need.

5) Is it worth paying for?

If your output is frequent and revenue-linked, usually yes.

6) What is the biggest risk when adopting it?

Skipping process design and expecting tool-only results.

7) What metric should I track first?

Cost per approved asset (CPAA) and time to first publish-ready output.

8) What should I do before committing long-term?

Run a 7-day benchmark with your real briefs and compare the results against your old workflow.

Final Recommendation

Treat Nano Banana Pro as an operational system, not a novelty generator.

If your growth model depends on fast, repeatable commercial visuals, prioritize workflow fit and output economics over tool hype.

Next Step

- Test real output workflow: AI Image Generator

- Reuse structured templates: Nano Banana Pro Prompts

- Choose plan by workload fit: Pricing